Team leader: Pierre Bénard - deputy: Fabien Lotte

We design capture, display and multimodal interaction systems, and we study human-machine interaction processes based on such systems. In addition, we develop 3D modeling and visualization techniques to provide fast communication between the real world and the digital 3D world. In particular, we explore approaches based on mixed reality (AR, VR), tangible interaction, brain-computer interfaces, and physiological interfaces.

This research is notably conducted within the Manao and Potioc project-teams, which are joint teams with Inria:

- Manao: http://manao.inria.fr/

- Potioc: https://team.inria.fr/potioc/

Research axes:

Optics: Capture & Display (Manao)

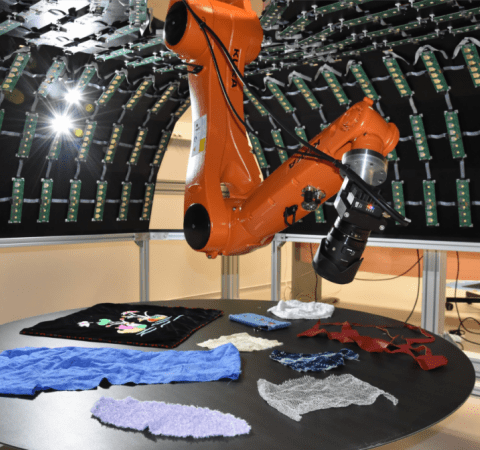

We investigate acquisition and display systems that combine optical instruments with digital processing. For acquisition systems, we favor direct measurements of the parameters of models and representations. For display systems, we are interested in projection-based systems, mixed reality (VR, AR), and 3D displays. We follow a unified approach that designs each system as a whole, rather than as a simple concatenation of optical instruments and digital processing. This opens new possibilities and may reduce the requirements in processing power, memory footprint, and cost.

Computer Graphics (Manao)

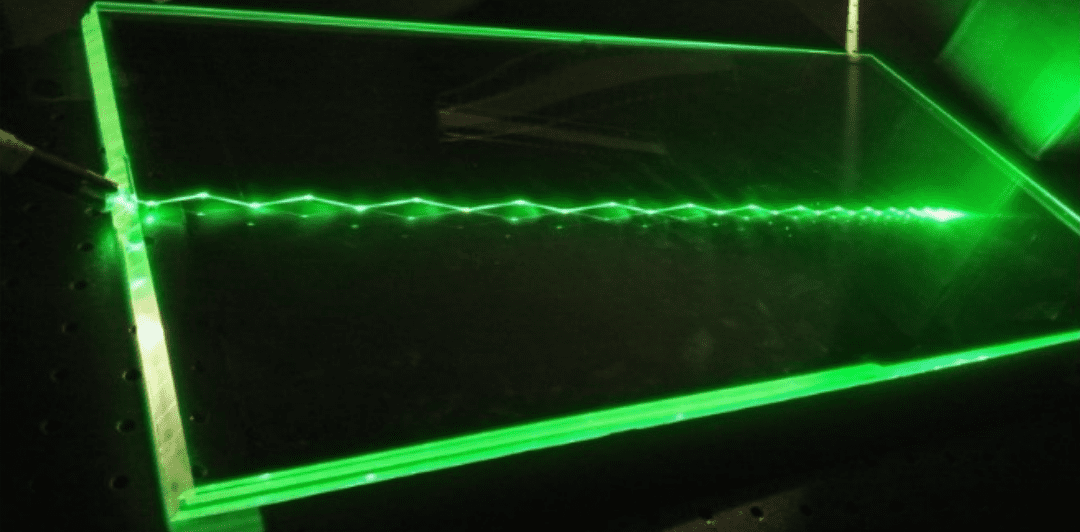

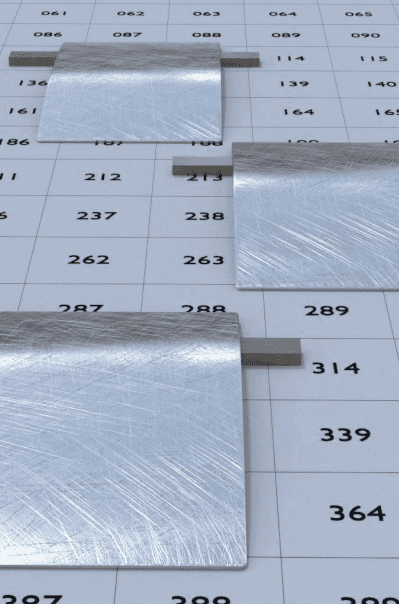

Our main goal is to study phenomena resulting from the interactions between the three components that describe light propagation and scattering in a 3D environment: light, shape, and matter. On the one hand, we develop theoretical tools and numerical models for analyzing and simulating the observed phenomena (e.g., diffraction, iridescence, hazy reflections). On the other hand, we are interested in methods to convey the shape or material characteristics of objects in animated 3D scenes. For visualization purposes, we develop photorealistic and expressive rendering algorithms that offer the final observer the most legible signal. In addition, we investigate the editing and modeling of shape and appearance with intuitive tools such as drawing or sculpting.

Human-Computer Interaction in Mixed-Reality Spaces (Potioc)

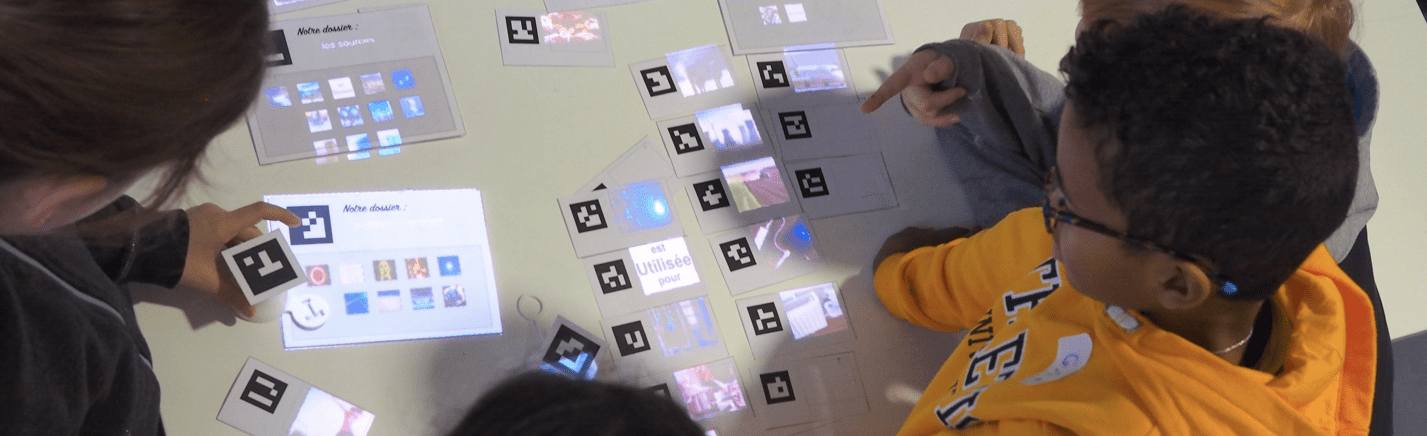

In Mixed-Reality interactive spaces (AR, VR), we explore interaction paradigms that encompass digital and/or physical objects. We are notably interested in hybrid environments that co-locate real and virtual spaces and that are based on tangible interaction. We also explore approaches that allow one to move from one space to the other. The main application areas are Education, Art, and Well-being.

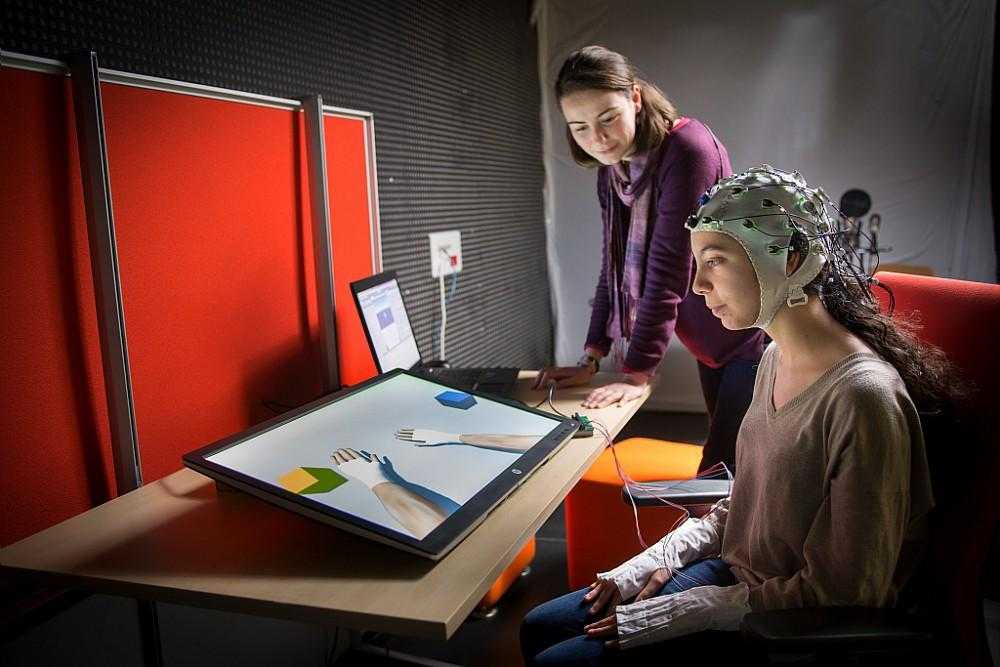

We explore new approaches in the domain of Brain-Computer Interfaces (BCI), i.e., systems enabling users to interact by means of brain activity only. We target BCI systems that are reliable and accessible to a large number of people. To do so, we work on brain signal processing algorithms as well as on understanding and improving the way we train users to control these BCIs.

Immersive and Situated Visualization (Potioc)

With immersive visualization, we explore new methods to remove the barriers between people and their data, and to provide them with tools that are embodied and thus more intuitive and engaging. To this end, we explore the use of new technologies such as augmented and virtual reality to immerse people in their data, as well as the use of physical materials and 3D printers to make physical visualizations. With situated visualization, we add the constraint of displaying data directly in its own context in the real world, and we study the impact on how the data is understood.